- Have a LangSmith account and keep its environment variables handy.

- Set those environment variables in your app so that EmbedJs can connect to your LangSmith project.

- Just use EmbedJs and everything will be logged to LangSmith, so that you can better test and monitor your application.

- First, make sure you have created a LangSmith account and have all the necessary variables handy. LangSmith has good documentation on how to get started with the service.

- Once you have set up the account, you will need the following environment variables:

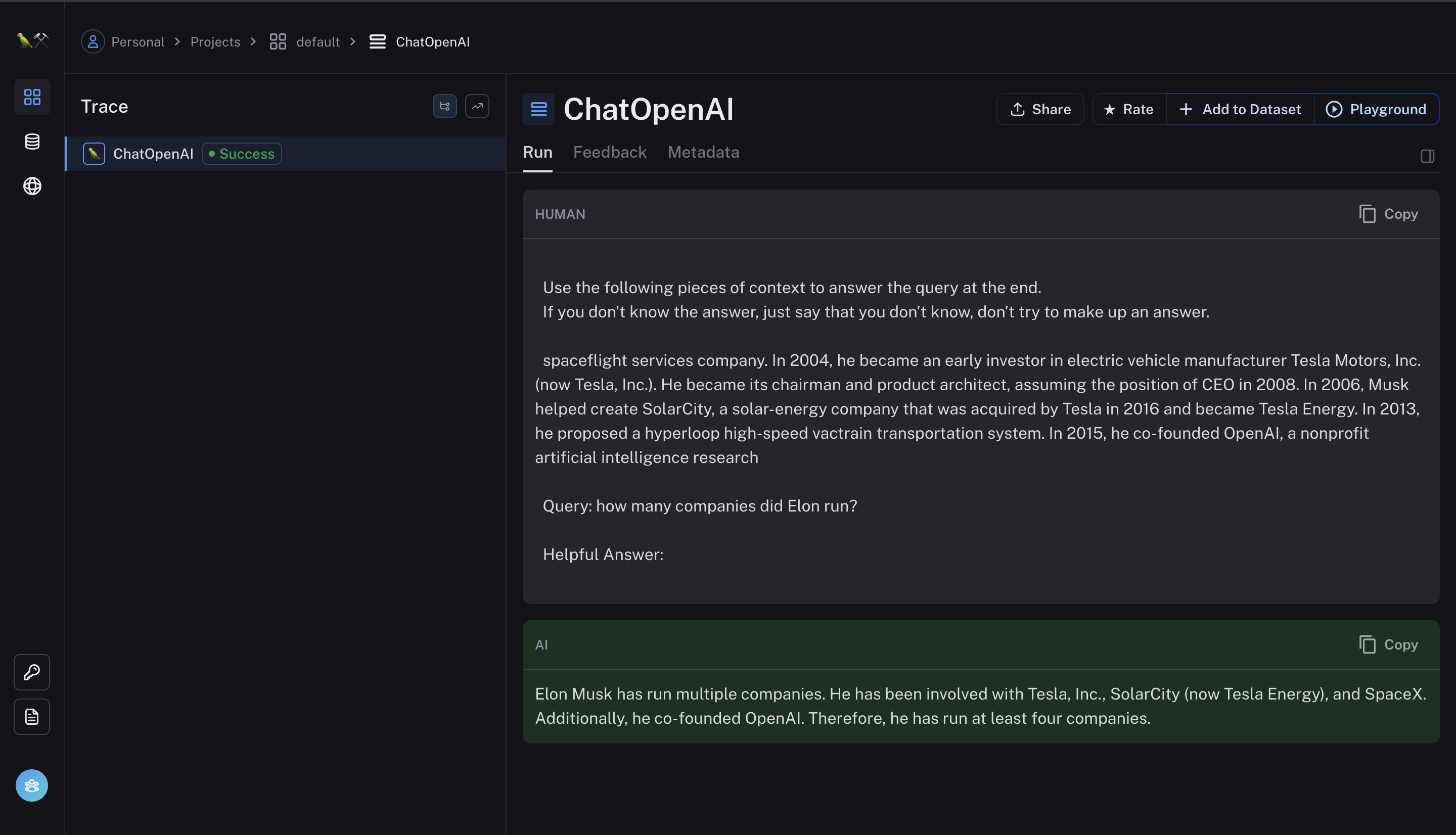

- Now create an app using EmbedJs. Its entire log will show up in LangSmith automatically.
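If you want the app to fail fast when tracing is not configured, so that a missing key surfaces at startup rather than as an empty LangSmith project, a small check can run before the EmbedJs app is built. This is a minimal sketch under assumptions: `missingLangSmithVars` is a hypothetical helper (not part of EmbedJs), and it assumes the standard LangChain variable names `LANGCHAIN_TRACING_V2` and `LANGCHAIN_API_KEY`. The optional `LANGCHAIN_ENDPOINT` and `LANGCHAIN_PROJECT` are not checked. If the variables shown in the screenshot differ (newer LangSmith SDKs also document `LANGSMITH_`-prefixed equivalents), use those names instead.

```typescript
// Hypothetical helper, not an EmbedJs API. The variable names are assumed to be
// the standard LangChain/LangSmith ones; adjust them to match your setup.
type Env = Record<string, string | undefined>;

const REQUIRED_LANGSMITH_VARS = ["LANGCHAIN_TRACING_V2", "LANGCHAIN_API_KEY"] as const;

// Returns a list of problems; an empty list means tracing looks configured.
function missingLangSmithVars(env: Env): string[] {
  const missing: string[] = [];
  for (const name of REQUIRED_LANGSMITH_VARS) {
    const value = env[name];
    // Treat unset and whitespace-only values as missing.
    if (value === undefined || value.trim() === "") {
      missing.push(name);
    }
  }
  // Tracing is only switched on when the flag is the string "true".
  if (!missing.includes("LANGCHAIN_TRACING_V2") && env.LANGCHAIN_TRACING_V2 !== "true") {
    missing.push('LANGCHAIN_TRACING_V2 (must be "true")');
  }
  return missing;
}
```

In a Node.js app you could call `missingLangSmithVars(process.env)` before building the EmbedJs app and throw an error listing the returned names if the list is non-empty.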